Human Bottlenecks

On the era of the centaur and playing the middle

I wanted to write this post when Moltbook happened, but travel was crazy and my daughter fell ill. I tried again one evening this week, but I had a headache and was craving to rest my mind for a while rather than stretch it endlessly. So instead, I went to bed and watched Bridgerton (how dare you, Benedict).

While I am a somewhat disciplined person, writing well is not something I manage to discipline myself into yet. It requires the electric rush of being in the zone.

Finally, I watched the release of Opus 4.6, Anthropic’s Claude model, just weeks after the release of Opus 4.5 and the release of OpenAI’s Codex 5.3: astounding progress over a very short period of time. That was the trigger that pushed me to my keyboard.

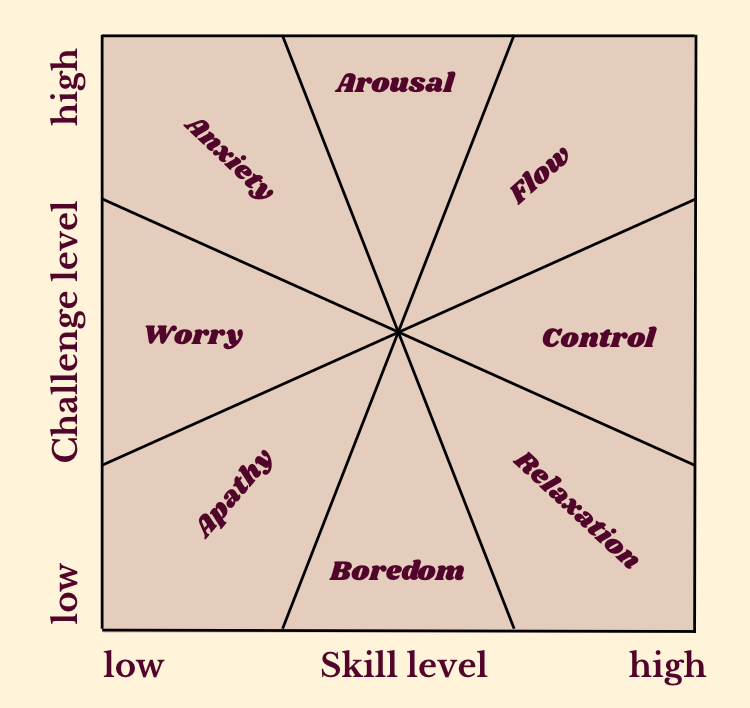

The concept of flow

The psychologist Mihaly Csikszentmihalyi became famous for recognising and naming the electric energy that got me writing, now widely known under the concept of flow. It is a state of intense focus and complete absorption in an activity, resulting in high enjoyment and loss of self-consciousness. Time rushes by and creativity and output are positively bursting from you. Flow emerges when we apply our abilities to worthy challenges, feeling like a masterful rider galloping across a vast terrain.

But flow has shadow states. Apathy emerges when both challenge and skill are low. We become disengaged and unmotivated. Anxiety strikes when challenges exceed our skills. We feel overwhelmed and stressed.

Arousal sits between flow and anxiety: we are stimulated, stretched slightly beyond our comfort zone, in a productive state for learning.

These states don’t just describe the preconditions for my writing, they also map the psychological terrain of AI adoption surprisingly well. AI adoption increasingly pushes knowledge work into alternating flow and anxiety, because humans are becoming the limiting factor in scaling output.

AI agents can drastically increase our productivity. The instant feedback loop creates a wide corridor of flow for people adopting the newest agentic coding models. Ideas can be built, tested, and iterated on with astonishing speed. In flow, this shows up as adrenaline and creative energy. Work stops feeling like work.

The internet is now awash with people sharing statements like these about working with coding agents: “I have NEVER worked this hard, nor had this much fun with work.”

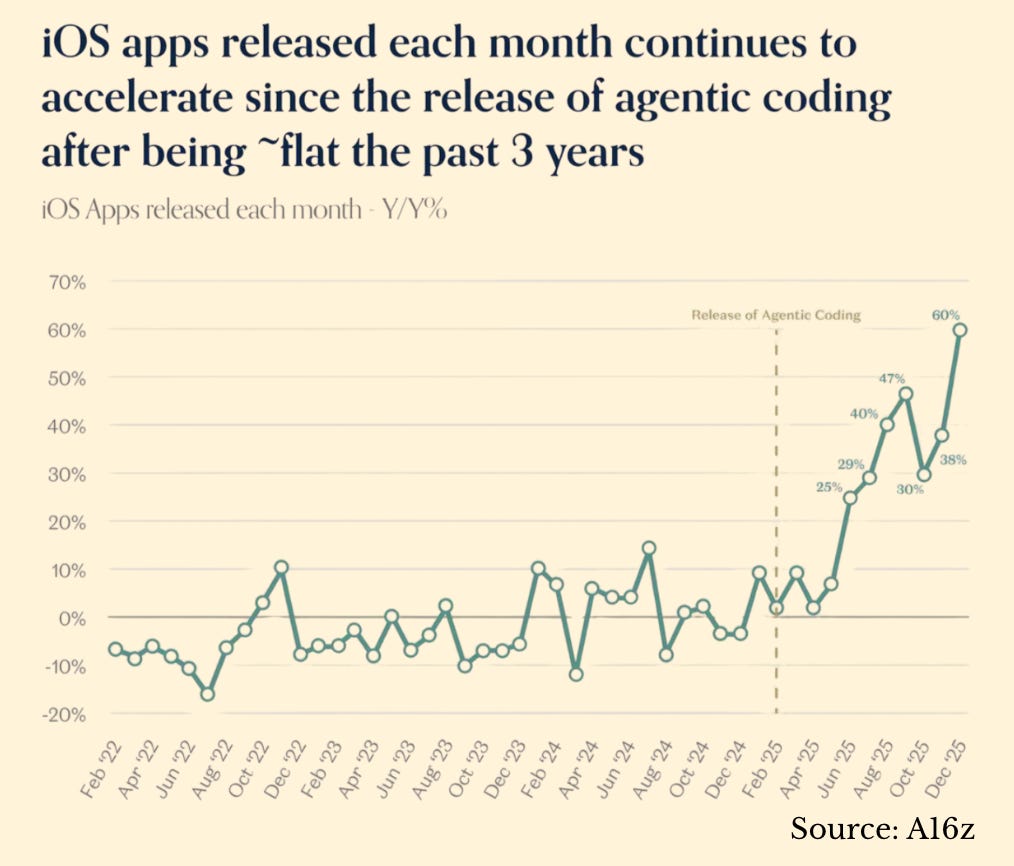

There is a reason people flock to hackathons like rock concerts and the App Store is seeing a vast increase in the number of newly released apps. There is a drug-like rush to building things that previously only existed in your head. It is addictive.

Each time a new model gets released, we re-enter an arousal state. We explore its capabilities with excitement but also uncertainty. How does this map against our existing skills? What new patterns must we learn?

As one developer on X aptly summarised:

Good enough to be economically useful

Not good enough to replace me

Perfect vibe zone.

But more and more, I also catch myself feeling a distinctly modern kind of anxiety. My balance gets rattled by each new model release. I feel permanently uprooted, fearing I will fall behind. Many leading developers and builders now report similar feelings: never having felt this far behind, being filled with wonder and profound sadness, even the sense that nearly every software engineer is experiencing some kind of mental health crisis.

At the frontier of AI adoption, flow and anxiety exist in equal measure. Strong AI adoption requires the agility to keep immersing ourselves in accelerating change, but immense flow is the reward we get in return.

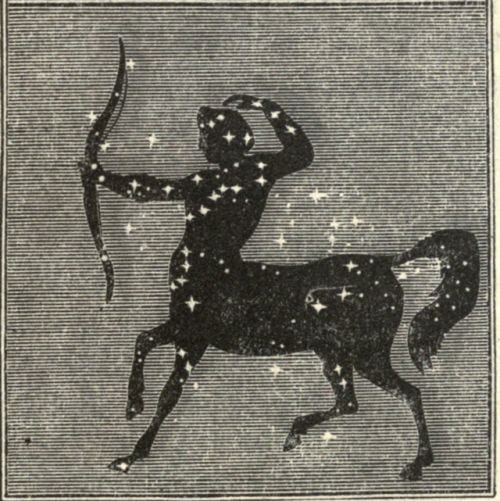

The glorious but transient era of the centaur

The partnership between humans and AI agents has ushered in the era of the centaur, named after the mythical half-man, half-horse being, where people and machines collaborate as copilots, each amplifying the other’s strengths and compensating for the other’s weaknesses.

But with the rapid progress of AI models and agentic coding, we can now start to discern what looms on the horizon:

roon is a prominent AI researcher and member of the technical staff at OpenAI. He believes the age of centaurs will end soon.

Corporations that are purely AI and robotics will vastly outperform any corporations that have people in the loop. Computer used to be a job that humans had. You would go and get a job as a computer where you would do calculations. They’d have entire skyscrapers full of humans, 20-30 floors of humans, just doing calculations. Now, that entire skyscraper of humans doing calculations can be replaced by a laptop with a spreadsheet.

That spreadsheet can do vastly more calculations than an entire building full of human computers. You can think, “okay, what if only some of the cells in your spreadsheet were calculated by humans?” Actually, that would be much worse than if all of the cells in your spreadsheet were calculated by the computer. Really what will happen is that the pure AI, pure robotics corporations or collectives will far outperform any corporations that have humans in the loop. And this will happen very quickly.

Their shared logic is simple: as models continue to progress, there will come a point when it no longer makes sense for us to work with AI. We will be too slow and too tired. We just want to swoon over Benedict Bridgerton. We will be too human. We will therefore become bottlenecks. A spreadsheet vastly outperforms a building full of human computers. Mixing human calculations into a spreadsheet would make it worse, not better.

I share their conviction. In the face of rapid model progress, I believe swarms of agents will at some point far exceed human programmer expertise and output. The idea that humans, in any domain accessible to AI models, would be anything but bottlenecks strikes me as wishful thinking, a desire to preserve our importance when the reality is that keeping up will at some point prove cognitively impossible.

The output we can produce will grow to an extent that is difficult to imagine today. What will we be able to build when we have systems that can vastly surpass human capabilities? Whatever it is, some time in the future, when we look back at that previous era of purely human programming, we will feel the same awe we feel standing before the pyramids today: unable to believe humans ever built such things without our current tools.

The trajectory is clear for anyone willing to read the exponential (METR). Human cognitive superiority looks increasingly like a historical artefact. We have operated at roughly the same cognitive capacity for 50,000 years, while the length of tasks AI agents can complete doubles roughly every seven months (AI Digest).
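To get a feel for what a seven-month doubling time means, here is a minimal Python sketch. It assumes the doubling time stays constant, which is my simplification for illustration and not something the trend guarantees; the function name `growth_factor` is made up for this example.

```python
def growth_factor(months: float, doubling_months: float = 7.0) -> float:
    """Multiplicative growth after `months`, assuming a constant doubling time."""
    return 2.0 ** (months / doubling_months)


# Print how much a quantity that doubles every seven months grows over a few horizons.
for years in (1, 2, 5):
    print(f"after {years} year(s): x{growth_factor(12 * years):.1f}")
```

Under that assumption, one year compounds to a bit more than a tripling, and the multiples become hard to picture within a few years. A fixed human baseline cannot keep up with that kind of arithmetic.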

The era of the centaur is as glorious as it is transient. It gives us the ability to build exceptional things, to give form to ideas that lie buried in our heads, just waiting to be created. But with every model update, with every increase in capability, we must also grapple with slowly saying goodbye to our role as the essential ingredient in producing economically valuable output for this world.

Diverging paths

This acceleration feels dizzying. I believe that if you don’t yet feel at least somewhat disoriented, you may not be tracking the frontier correctly. A sense of disorientation has become the new normal.

Some will jump into the AI adoption wave headfirst, training themselves to master fleets of agents. Early playbooks for managing agents at scale are beginning to emerge. For those accustomed to work weeks of 60 hours or more and juggling multiple work streams, this transition will feel somewhat natural. The future demands the mental fitness of an athlete, but then again the economy already thrives on a high-performance mental elite.

But the majority of people do not fall into this camp. They get paralysed by the anxiety of perpetual catch-up, feeling overwhelmed at the mere thought of working harder to not fall behind. Many lack the mental stamina for constant context-switching. At some point, exhaustion wins.

This dynamic will likely produce severe productivity gaps between those who find flow in the ever-more-powerful AI frontier and those held captive by their anxiety.

The fault lines

The divide between AI-haves and have-nots is less obvious than we might expect.

Young people face a paradox. They have the mental flexibility to immerse themselves fully in AI, and they often outpace older peers on speed of iteration and tool fluency. Yet they lack the domain expertise that turns that speed into direction. What should the agents build? Which problems are worth solving? What context actually matters? Without real-world context and taste, managing agents becomes a fruitless exercise.

At the same time, AI offers young people an extraordinary advantage: the ability to produce output that rivals seasoned experts. A 20-year-old with strong agentic coding skills and basic domain knowledge can now outpace the productivity of a 45-year-old industry veteran.

This creates distinct experiences of the AI transition:

Young workers live in perpetual arousal. Each new model feels like a level playing field. They have no legacy skills to defend and no established expertise being devalued. Every capability release is an opportunity to close the gap with their seniors. The constant state of not-quite-mastery feels productive, maybe even exhilarating. They are stretched but not overwhelmed.

Experienced workers oscillate between flow and anxiety. When they successfully integrate AI into their expertise, they achieve flow at a higher level than ever before. Their accumulated knowledge guides the agents. They feel masterful.

But each model update threatens to make what they know obsolete. The anxiety creeps in: Am I still needed? Is my expertise still valuable? Can I keep pace with those who started from scratch?

The convergence

Both paths ultimately lead to the same destination. The young worker’s perpetual arousal cannot be sustained indefinitely. Eventually, the gap between human learning speed and AI capability growth becomes unbridgeable. The experienced worker’s flow state cannot be maintained as AI systems become too complex, too autonomous and too fast to meaningfully direct.

But for now, the era of the centaur offers both groups a window of exceptional productivity.

A way forward: Grounded, playful, running

How do we navigate the era of the centaur and the transition towards our cognitive displacement?

I would suggest a three-part strategy: grounding ourselves in our humanity, leaning into playful exploration, and embracing that we must run faster than ever before.

First: staying grounded. I have written elsewhere about becoming unfuckwithable in the age of AI. The essence is focusing on creativity, intrinsic motivation, adaptability, and human groundedness. This means spending time in nature, inhabiting our bodies, exploring the physical world, connecting with friends and family, and sharing rituals that create identity and belonging.

When we ground ourselves in our humanity, we avoid tying our sense of self too closely to our labour and output. Without that grounding, we become tense and anxious, feeling struggle and stress in the face of constant disruption caused by AI, rather than curiosity and wonder. A deeper sense of self-worth keeps us stable when the ground permanently shifts beneath our feet.

Second: staying playful. Those grounded in their humanity can embrace the playfulness that comes with AI. Play is the most powerful exploration tool for this world. It is how mammals explore novel and complex environments with high uncertainty. Neuroscience shows that play activates the brain's reward circuits while reducing threat perception, allowing us to engage with new tools without triggering anxiety responses. Studies of "neoteny" (the retention of juvenile traits) even show that our extended capacity for play is linked to our exceptional adaptability over thousands of years.

Play with the most advanced AI models. Today, that means Claude Code, Cowork and OpenAI Codex. If you are stuck in basic chat mode, take a few days off from work and explore. Build a game. Interactively visualise a story. Create a silly dream tracker that generates recipe ideas based on your most recent lucid adventures. Do whatever feels interesting to you.

Playfulness reduces distance to the AI frontier and reminds us that mastery can feel like joyful exploration rather than dreary obligation.

Third: running faster. Groundedness and playfulness give us energy for what AI adoption actually demands: keeping pace with accelerating technology. Whatever intensity and speed felt sufficient in the past must now be turned up several notches.

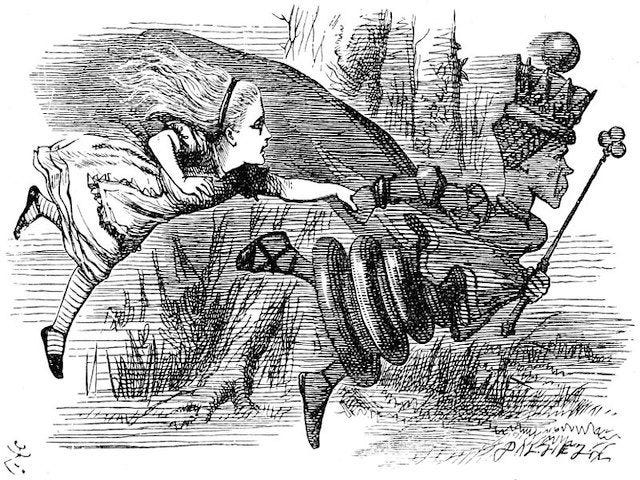

We are entering the Red Queen’s Race from Lewis Carroll’s Through the Looking-Glass, the sequel to Alice in Wonderland. In the story, Alice runs constantly but remains in the same spot.

“Well, in our country,” said Alice, still panting a little, “you’d generally get to somewhere else—if you run very fast for a long time, as we’ve been doing.”

“A slow sort of country!” said the Queen. “Now, here, you see, it takes all the running you can do, to keep in the same place. If you want to get somewhere else, you must run at least twice as fast as that!”

There is no beating around the bush: the stories of AI coming to do all of our work while we get to relax are fairy tales. At least until the era of the centaur is over. AI demands more rather than less from us, until a point in time at which it demands nothing from us.

Those who are able to turn it up a notch must do so. This goes for individuals as much as companies, countries and continents: AI adoption and AI leadership require an immense effort to not fall behind. It requires superhuman effort to get ahead.

I know that not everyone will be able to accelerate their pace. Many people can’t or won’t, and I hope we can continue to build a society that nonetheless works for everyone.

Playing the middle

The centaur era feels glorious because we remain useful, at least in narrow economic terms. We run twice as fast just to keep up and for some this feels energising, even addictive, as their capabilities grow and they find flow states with AI.

But even in a state of constant flow and perpetual running, we can now start to see what lies ahead: at some point, AI will outpace us entirely.

Yet seeing the endgame does not mean we have arrived at it.

As Will Manidis, the founder of ScienceIO, writes:

“We have confused simulating an endgame with arriving at one. These are not the same thing. The ability to model the terminal state of AI does not mean the terminal state of AI is close. The middle is still where the complications live, where the position is ambiguous and the thing no one modelled happens and you have to play the board as it is.

The middle is two thousand years long and counting and we are somewhere in it and the eschaton is not ours alone to force.”

Manidis is pointing at a mistake we keep making: we extrapolate capability curves, but we live inside adoption curves. The middle game may last far longer than pure exponential curves suggest. AI develops rapidly but diffuses through our world only at the pace humans enable it to, and humans change slowly. The centaur era may therefore extend for decades, not years.

The era of human cognitive dominance is ending. We can see its terminus now, even if we have not yet reached it. That knowledge shapes how we experience the present and shows up with heightened emotions: flow, rush, anxiety and sorrow.

Yet if we assume the middle game may be long, we have time to shape how it unfolds. The years ahead will be disruptive, exciting and uncertain. There is no stopping that. But they are still ours to navigate.

By playing the middle and by taking our agency in it seriously, we are preparing for the end so that it need not be one.

We can help more people thrive in the centaur era. We can explore playfully and work hard on finding flow with AI. We can build systems that support us when apathy and anxiety hit. We can support each other through sorrow and disorientation. We can ensure that when the centaur era ends, we face it with our humanity intact rather than already exhausted.

There is a children’s song many of us grew up with called “Going on a Bear Hunt.” When the children encounter a river or mud, they chant:

Can’t go over it. Can’t go under it. Oh no! Got to go through it!

We cannot close our eyes and return to how things were. The only way forward is through the middle game.

Despite all the disorientation, I take comfort in knowing that by going through it, we all go through it together.

Hi Judith, I think this is a terrific piece. It really resonated with me. Keep writing, you have a wonderful gift for giving a voice to some of the swelling anxieties that we all grapple with as we navigate this momentous transition.

"AI adoption and AI leadership requires an immense effort to not fall behind. It requires superhuman effort to get ahead." You've really distilled something for me, Judith. The subtle danger of AI acceleration isn't just that we feel like we're falling behind. It's that it takes away a feeling of progress, one of the deepest sources of human motivation.